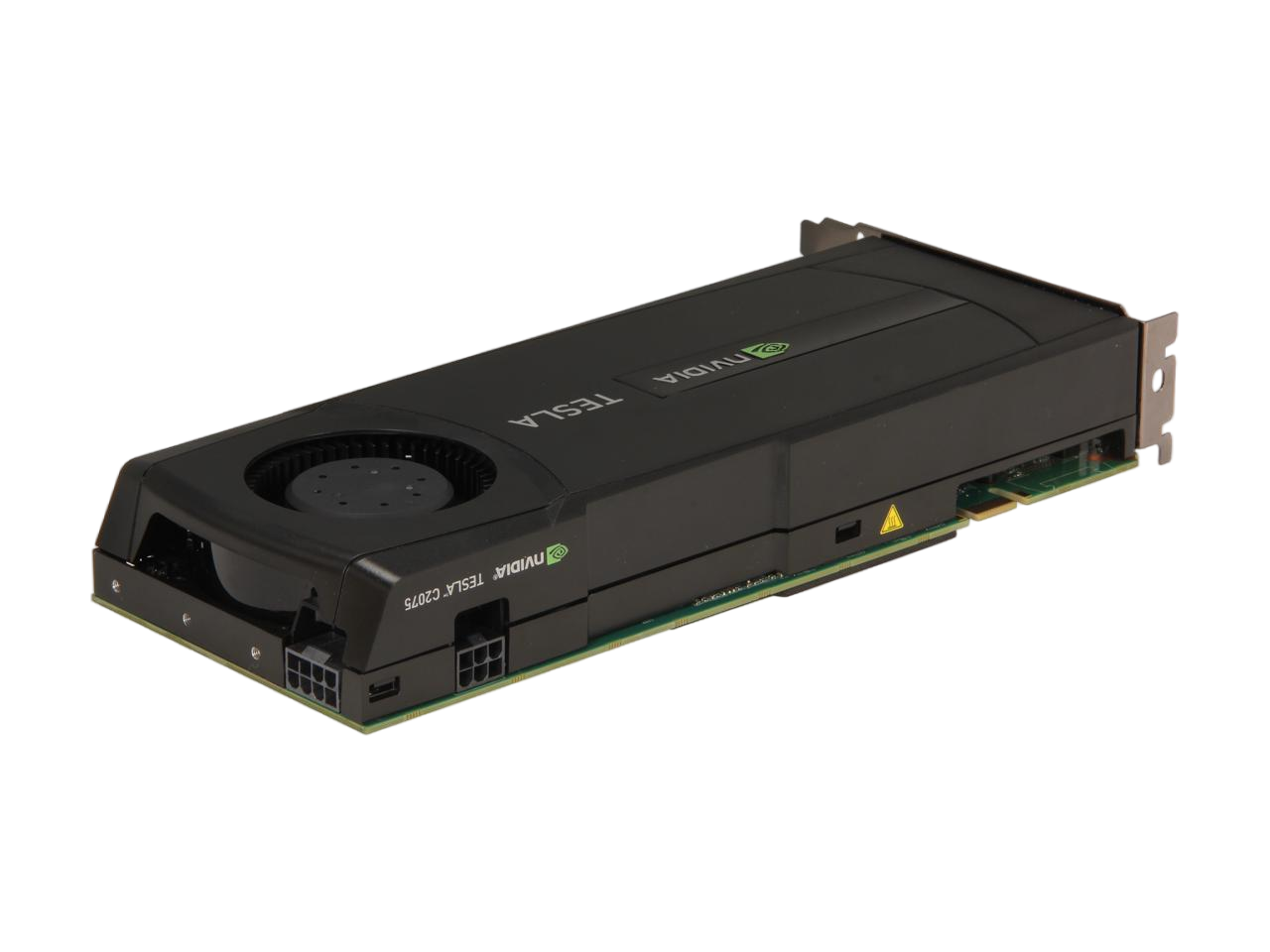

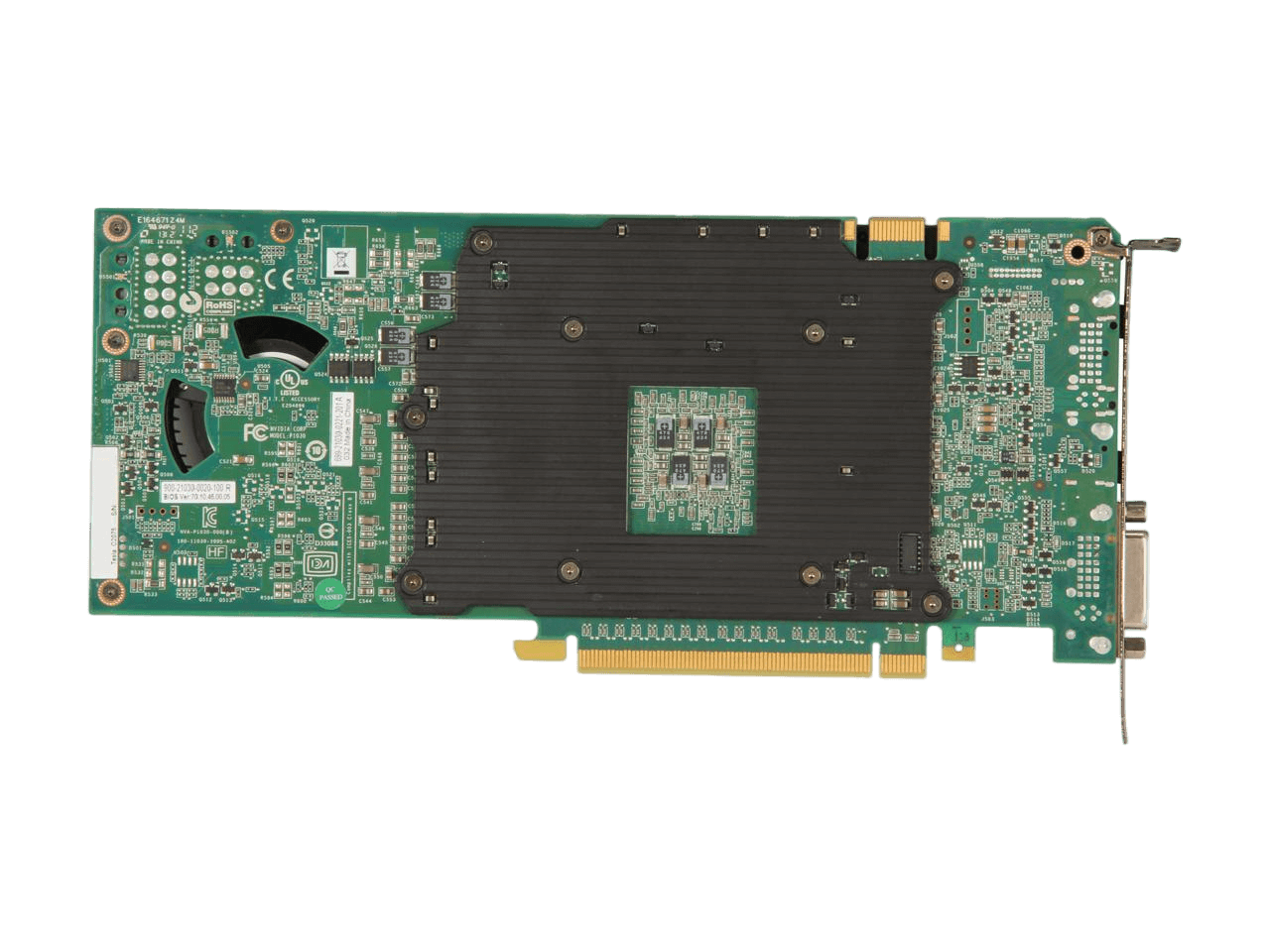

NVIDIA TESLA Tesla C2075 900-21030-0020-100 6GB 384-bit GDDR5 PCI Express 2.0 x16 Workstation Video Card

SKU:

7GC1231

Availability:

In Stock

Free shipping

$645.00

Secure checkout with:

Brand:

NVIDIA

Overview

| Brand | NVIDIA |

|---|---|

| Series | TESLA |

| Model | 900-21030-0020-100 |

| Interface | PCI Express 2.0 x16 |

|---|

| Chipset Manufacturer | NVIDIA |

|---|---|

| GPU | Tesla C2075 |

| CUDA Cores | 448 |

| Memory Size | 6GB |

|---|---|

| Memory Interface | 384-bit |

| Memory Type | GDDR5 |

| DVI | 1 |

|---|

| Digital Resolution | 1600x1200 |

|---|---|

| Cooler | With Fan |

| Dual-Link DVI Supported | Yes |

| Operating Systems Supported | Windows, Linux |

| Features | Tesla C2075 delivers up to 515 Gigaflops of double-precision peak performance in each GPU, enabling a single workstation to deliver a Teraflop or more of performance. Maximizes performance and reduces data transfers by keeping larger data sets in local memory that is attached directly to the GPU. Meets a critical requirement for computing accuracy and reliability for workstations. Offers protection of data in memory to enhance data integrity and reliability for applications. Register files, L1/L2 caches, shared memory, and DRAM all are ECC protected. Accelerates algorithms such as physics solvers, ray-tracing, and sparse matrix multiplication where data addresses are not known beforehand. This includes a configurable L1 cache per Streaming Multiprocessor block and a unified L2 cache for all of the processor cores. Maximizes the throughput by faster context switching that is 10X faster than previous architecture, concurrent kernel execution, and improved thread block scheduling. Turbocharges system performance by transferring data over the PCIe bus while the computing cores are crunching other data. Even applications with heavy data-transfer requirements, such as seismic processing, can maximize the computing efficiency by transferring data to local memory before it is needed Choose C, C++, OpenCL, DirectCompute, or Fortran to express application parallelism and take advantage of the "Fermi" GPU's innovative architecture. NVIDIA Parallel Nsight GPU debugging tool is available for Microsoft Visual Studio developers. Maximizes bandwidth between the host system and the Tesla processors. Enables Tesla products to work with virtually any PCIe-compliant host system with an open PCIe x16 slot. |

|---|